What is the problem you are having with rclone?

I used to run rclone via Rclone Browser on Windows, which performed very well on my old 2012 desktop (i.e. it would max out my gigabit connection at around 100-105MB/s without using much RAM or CPU).

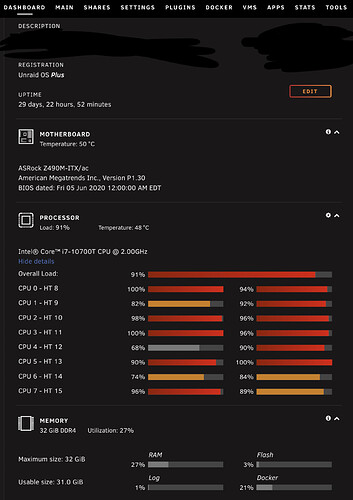

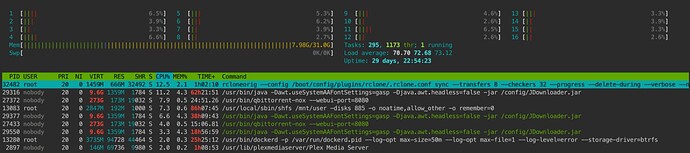

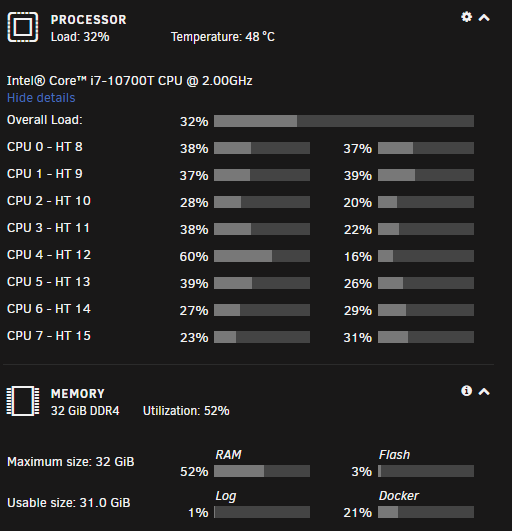

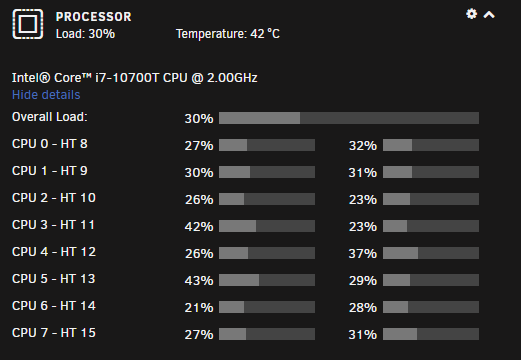

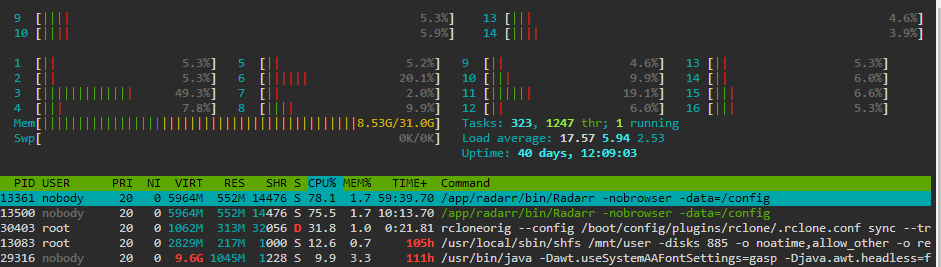

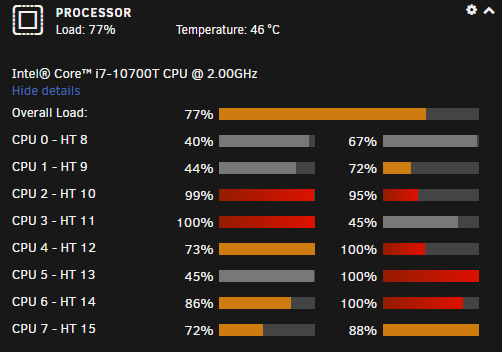

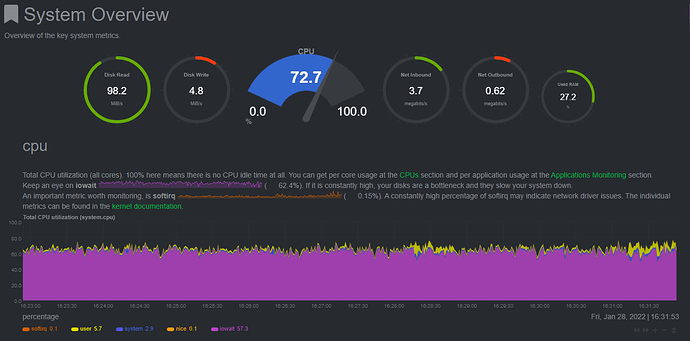

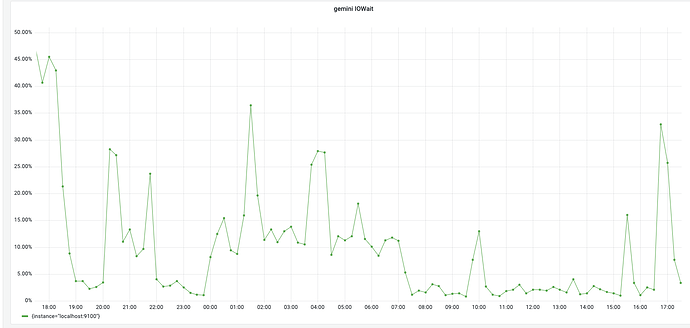

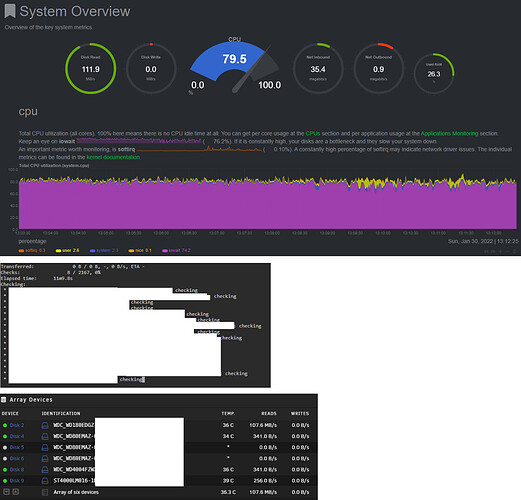

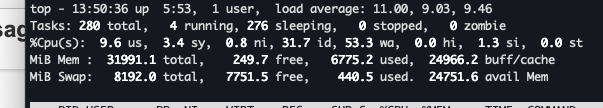

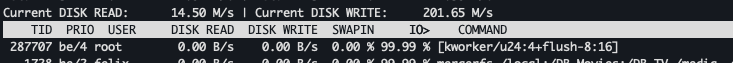

I moved from Windows to Unraid, installed the rclone plugin, and now run all my rclone commands via the Unraid web terminal. On that 2012 Windows desktop I used to run 16 transfers and 32 checkers; here I'm running 4 transfers / 16 checkers and it's causing 100% CPU usage on Unraid, which makes the other Docker containers running on my Unraid build basically unusable. On top of this, the upload speed is a pitiful ~5MB/s. I am able to download files from my Google Drive (GD) to the local array at saturated gigabit speeds, but upload performance is horrendous and CPU usage is extremely high. RAM seems okay.

Any idea what I can do to fix this? I would first like to optimize the rclone command I run via the Unraid web terminal so that it isn't maxing out my CPU for days while uploading a few TB of files, and I would also like to improve my upload speed. See the pictures in this post for resource usage.

Run the command 'rclone version' and share the full output of the command.

rclone v1.58.0-beta.5930.b4ba7b69b

- os/version: slackware 14.2+ (64 bit)

- os/kernel: 5.10.28-Unraid (x86_64)

- os/type: linux

- os/arch: amd64

- go/version: go1.17.6

- go/linking: static

- go/tags: none

Which cloud storage system are you using? (eg Google Drive)

Google Drive

The command you were trying to run (eg rclone copy /tmp remote:tmp)

rclone sync --transfers 8 --checkers 32 --progress --delete-during --verbose --no-update-modtime --contimeout 60s --timeout 300s --retries 3 --low-level-retries 10 --drive-chunk-size=64M --drive-upload-cutoff=64M --stats 1s --stats-file-name-length 0 --fast-list --drive-acknowledge-abuse "/mnt/user/Personal" "GD:GD/Personal"
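For comparison, a lower-impact variant of the command above might look like the sketch below. It is only a starting point for experimentation: the reduced `--transfers`, `--checkers` and `--stats` values are untested guesses, and the `run_sync` function and `DRY_RUN` variable are hypothetical helpers added for illustration. `nice` only lowers rclone's scheduling priority so other processes get the CPU first; it does not reduce the total work rclone does.

```shell
#!/bin/sh
# Sketch only: the reduced values below are guesses to experiment with,
# not tuned or recommended settings.

# DRY_RUN defaults to "echo", so the command is only printed.
# Run with DRY_RUN= (empty) to actually start the sync.
DRY_RUN=${DRY_RUN-echo}

run_sync() {
  # nice -n 19 gives rclone the lowest CPU scheduling priority, so other
  # processes should be scheduled ahead of it when they need the CPU.
  $DRY_RUN nice -n 19 rclone sync \
    --transfers 4 \
    --checkers 8 \
    --progress \
    --delete-during \
    --verbose \
    --no-update-modtime \
    --contimeout 60s \
    --timeout 300s \
    --retries 3 \
    --low-level-retries 10 \
    --drive-chunk-size=64M \
    --drive-upload-cutoff=64M \
    --stats 10s \
    --stats-file-name-length 0 \
    --fast-list \
    --drive-acknowledge-abuse \
    "/mnt/user/Personal" "GD:GD/Personal"
}

run_sync
```

Printing the command first makes it easy to check the flags before committing to a multi-day upload. Whether this helps at all depends on where the CPU time is actually going, which the information above does not establish.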

The rclone config contents with secrets removed.

[GD]

type = drive

client_id = xxx.apps.googleusercontent.com

client_secret = xxx

token = {"access_token":"xxx","token_type":"Bearer","refresh_token":"xxx","expiry":"xxx"}