bankzy

April 22, 2021, 7:15am

1

Hi,

I'm trying to set up a weekly cron job in Vesta CP that uses rclone to copy to my external Google Drive, and also to do weekly MySQL dumps. Can someone point me to the best way to achieve this?

The rclone command I currently have working over SSH is:

rclone copy --update --verbose --transfers 30 --checkers 8 --contimeout 60s --timeout 300s --retries 3 --low-level-retries 10 --stats 1s "/home/site/pubic_html" "gdrive:public_html"

Just trying to follow the question: if that command is working for you, you'd just put it into cron.

You have newer files that you don't want to overwrite in the destination?

-u, --update Skip files that are newer on the destination.

Just trying to figure out why you have that.

bankzy

April 22, 2021, 12:42pm

3

Yeah, I'm trying to set up this cron job as an offsite backup, so it only copies files that are new.

That flag means if you have a file on the source and the destination has a newer file, it won't overwrite it.

bankzy

April 22, 2021, 12:45pm

5

Is this the best command for doing weekly offsite backups or am I doing it wrong?

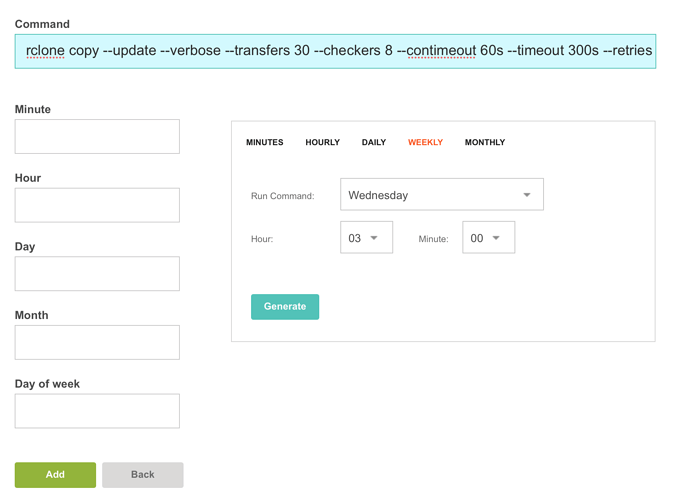

I'm assuming all I would need to do is add this command to the Vesta CP cron jobs like so?

I'd start with no flags and use the defaults.

bankzy

April 22, 2021, 12:47pm

7

As in:

rclone copy "/home/site/pubic_html" "gdrive:public_html"

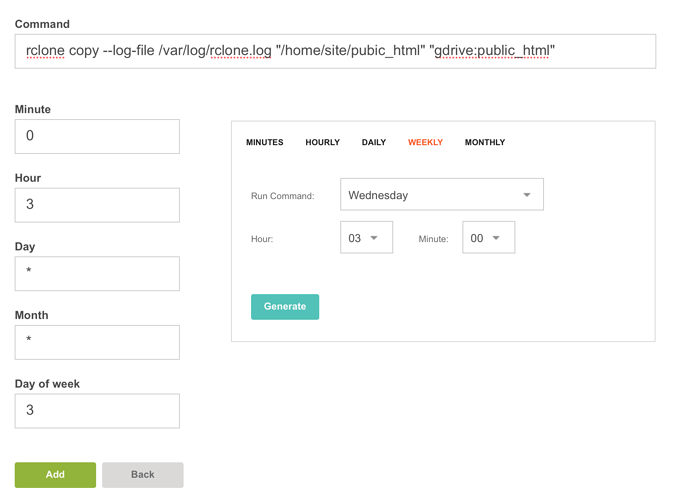

Sure. You can decide if you want a log file or not, and add that in.

--log-file /some/log/rclone.log

bankzy

April 22, 2021, 12:50pm

9

So let's say I wanted to run this command every Wednesday in Vesta CP like so:

I wouldn't have to worry about duplicate files being copied to my google drive with the cronjob?

The way copy works is that it uploads/updates any new files from the source to the destination. Not sure what you mean by duplicates.
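For the weekly schedule, a rough sketch of what the cron entry could look like. The 03:00 run time is just an assumption, and the log path is the placeholder from earlier in the thread. The five fields are minute, hour, day of month, month, and day of week, where 3 means Wednesday. In a control panel these values usually go into separate fields, with the rclone command in the command box.

```text
# min hour day-of-month month day-of-week  command
0 3 * * 3 rclone copy "/home/site/pubic_html" "gdrive:public_html" --log-file /some/log/rclone.log
```

Cron often runs with a minimal PATH, so you may need the full path to the rclone binary instead of just `rclone`.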

bankzy

April 22, 2021, 12:53pm

11

Sorry, what I meant was: the copy wouldn't re-upload files that have already been copied to the Google Drive destination?

If you have the same file and try to upload it:

felix@gemini:~$ rclone copy /etc/hosts GD:

felix@gemini:~$ rclone copy /etc/hosts GD: -vv

2021/04/22 08:54:32 DEBUG : Using config file from "/opt/rclone/rclone.conf"

2021/04/22 08:54:32 DEBUG : rclone: Version "v1.55.0" starting with parameters ["rclone" "copy" "/etc/hosts" "GD:" "-vv"]

2021/04/22 08:54:32 DEBUG : Creating backend with remote "/etc/hosts"

2021/04/22 08:54:32 DEBUG : fs cache: adding new entry for parent of "/etc/hosts", "/etc"

2021/04/22 08:54:32 DEBUG : Creating backend with remote "GD:"

2021/04/22 08:54:32 DEBUG : hosts: Size and modification time the same (differ by -813.634µs, within tolerance 1ms)

2021/04/22 08:54:32 DEBUG : hosts: Unchanged skipping

2021/04/22 08:54:32 INFO :

Transferred: 0 / 0 Bytes, -, 0 Bytes/s, ETA -

Checks: 1 / 1, 100%

Elapsed time: 0.4s

2021/04/22 08:54:32 DEBUG : 4 go routines active

felix@gemini:~$

It doesn't re-upload the file, because it sees that the source and destination already match.

bankzy

April 22, 2021, 12:56pm

13

Thanks so much for the clarification!

system

June 22, 2021, 8:56am

14

This topic was automatically closed 60 days after the last reply. New replies are no longer allowed.

Do you have any advice on what command I could use to do weekly MySQL database dumps and rclone them to my Google Drive?
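One possible approach, as a minimal sketch rather than a tested recipe: dump the database to a date-stamped, gzipped file in a local folder, then `rclone copy` that folder to the remote. The database name, local folder, remote folder, and the `--run` switch are all made up for illustration. It also assumes the MySQL credentials live in `~/.my.cnf` so no password appears in the script.

```shell
#!/usr/bin/env bash
# Sketch of a weekly MySQL dump + upload. All names below are placeholders.
set -euo pipefail

DB_NAME="mydb"                      # hypothetical database name
DUMP_DIR="/home/site/db_backups"    # hypothetical local staging folder
REMOTE="gdrive:db_backups"          # hypothetical folder on the gdrive remote

# Build a date-stamped file name from a database name and a date.
dump_name() {
    printf '%s-%s.sql.gz' "$1" "$2"
}

run_backup() {
    local file
    file="$DUMP_DIR/$(dump_name "$DB_NAME" "$(date +%F)")"
    mkdir -p "$DUMP_DIR"
    # Credentials are assumed to come from ~/.my.cnf.
    mysqldump --single-transaction "$DB_NAME" | gzip > "$file"
    # Upload the folder; dumps already on the remote are skipped.
    rclone copy "$DUMP_DIR" "$REMOTE"
}

# Only do the real work when called with --run (e.g. from cron).
if [ "${1:-}" = "--run" ]; then
    run_backup
fi
```

Saved as a script and made executable, it could be scheduled from cron in the same way as the rclone copy, calling it with `--run`. Old dumps pile up in the local folder unless you prune them separately.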
