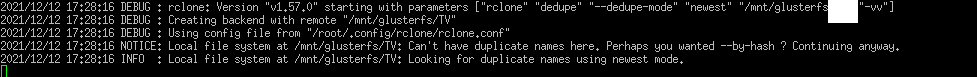

My glusterfs mount has duplicated files, and when running rclone dedupe it doesn't find all the duplicates by name. rclone warns that the target is a local file system but runs anyway.

Command:

rclone dedupe --dedupe-mode newest /mnt/glusterfs -vv

rclone v1.57

It runs further but it doesn't delete all the duplicates.

asdffdsa

December 12, 2021, 8:40pm

2

hi,

based on that debug log, it looks like glusterfs, like most file systems, cannot have duplicate file names in the same folder.

i believe that by default, rclone looks for dupes based on filename, which is quick and easy.

to dedupe based on hash is slow and takes a lot of system resources and must be explicitly enabled using --by-hash
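To make the difference between the two matching strategies concrete, here is a minimal Python sketch. It is not how rclone implements dedupe; the functions `group_by_name` and `group_by_hash` and the sample entries are hypothetical, and SHA-256 is chosen only for illustration. It shows that name-based matching catches two same-named files even when their contents differ, while hash-based matching only groups files whose contents are identical.

```python
import hashlib
from collections import defaultdict


def group_by_name(entries):
    """Group file contents by (directory, name); keep groups with more than one entry."""
    groups = defaultdict(list)
    for directory, name, data in entries:
        groups[(directory, name)].append(data)
    return {key: items for key, items in groups.items() if len(items) > 1}


def group_by_hash(entries):
    """Group (directory, name) pairs by content hash; keep groups with more than one entry."""
    groups = defaultdict(list)
    for directory, name, data in entries:
        digest = hashlib.sha256(data).hexdigest()
        groups[digest].append((directory, name))
    return {key: items for key, items in groups.items() if len(items) > 1}


# Hypothetical listing: (directory, file name, file content).
entries = [
    ("docs", "a.txt", b"hello"),
    ("docs", "a.txt", b"hel"),    # same name, different (e.g. truncated) content
    ("docs", "b.txt", b"hello"),  # different name, same content as the first entry
]

name_dupes = group_by_name(entries)
hash_dupes = group_by_hash(entries)
```

In this sample, `name_dupes` contains the two `a.txt` entries, while `hash_dupes` pairs the first `a.txt` with `b.txt` and never matches the truncated copy.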

I have checked, and there are duplicate files with the same name. So I want to run the check by filename, not by hash

also hash wouldn't work since the files have different sizes, because they are corrupted

asdffdsa

December 12, 2021, 8:57pm

5

not a linux expert, but i thought that most/all local file systems cannot handle duplicate names,

perhaps glusterfs can handle duplicate names, but once mounted, linux cannot handle it.

gdrive remotes can have duplicate file names, and rclone dedupe can work on that. but on an rclone mount of that gdrive remote, rclone cannot display the duplicate filenames, since the linux file system cannot.

I can see the duplicate files with ls, on the glusterfs mount

asdffdsa

December 12, 2021, 9:02pm

7

can you post the output of ls on a single folder with multiple files with the exact same filenames?
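As a complement to the ls output, a short Python sketch like the one below could report which names appear more than once in the raw directory listing of a single folder. The helper names `duplicate_names` and `scan_directory` are hypothetical, and the sketch assumes that repeated entries returned by the mount are passed through unchanged by `os.scandir`, which may not hold on every system.

```python
import os
from collections import Counter


def duplicate_names(names):
    """Return {name: count} for every name that occurs more than once."""
    counts = Counter(names)
    return {name: n for name, n in counts.items() if n > 1}


def scan_directory(path):
    """List one directory (non-recursive) and report repeated entry names."""
    with os.scandir(path) as entries:
        return duplicate_names(entry.name for entry in entries)
```

Calling `scan_directory()` on one affected folder under `/mnt/glusterfs` would return an empty dict if the listing contains no repeated names.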

I have run rclone dedupe on the folder about 4 times by now and it didn't clear this duplicate.

It's weird because every run it seems to find some stuff, but never all at once

asdffdsa

December 12, 2021, 9:11pm

9

really do not know as i thought local file systems could not have duplicated filenames in the same folder.

however, there are many dedupe tools, have you tried one of them?

Upon further testing, it seems rclone tries to delete it but fails. Deleting the same file with rm works.
