What is the problem you are having with rclone?

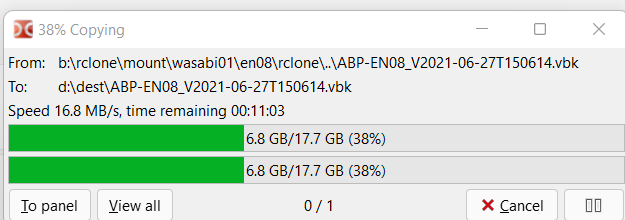

My home download speed is about 500 Mbps (62.5 MB/s). When downloading files from a Google Drive share I'm getting reasonable speeds, but nowhere near the theoretical maximum.

Run the command 'rclone version' and share the full output of the command.

It's running in a Docker container, so:

rclone v1.57.0

- os/version: alpine 3.14.2 (64 bit)

- os/kernel: 5.4.0-91-generic (x86_64)

- os/type: linux

- os/arch: amd64

- go/version: go1.17.2

- go/linking: static

- go/tags: none

Which cloud storage system are you using? (eg Google Drive)

Google Drive

The command you were trying to run (eg rclone copy /tmp remote:tmp)

mount gcrypt: /data/gcrypt --allow-other --dir-cache-time 1000h --log-level INFO --poll-interval 15s --umask 000 --user-agent oop --rc --rc-addr :5572 --cache-dir=/cache --vfs-cache-mode full --vfs-cache-max-size 250G --vfs-cache-max-age 2000h --vfs-read-ahead 2G --vfs-read-chunk-size-limit 0 --vfs-cache-poll-interval 5m --allow-non-empty

The rclone config contents with secrets removed.

I think this is pretty standard, but here it is:

[gcrypt]

type = crypt

remote = gdrive:media

filename_encryption = standard

directory_name_encryption = true

password =

password2 =

[gdrive]

type = drive

scope = drive

server_side_across_configs = true

client_id =

client_secret =

team_drive =

token =

A log from the command with the -vv flag

I can't do this at the moment. The log level is set to INFO and the log only contains messages about the VFS cache being cleaned.

Extra Info

I tested the read speed of the drive mount with dd if={a rather large file} of=/dev/null bs=1G count=1 and the output is:

1+0 records in

1+0 records out

1073741824 bytes (1.1 GB, 1.0 GiB) copied, 55.2374 s, 19.4 MB/s
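To put that figure next to the line speed, here is a rough sketch of the arithmetic in Python. The function names are made up for illustration; the numbers are the ones from the dd output above and my 500 Mbps connection, and MB here means 10^6 bytes, as in dd's output.

```python
def mbps_to_mb_per_s(mbps: float) -> float:
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8


def throughput_mb_per_s(total_bytes: int, seconds: float) -> float:
    """Throughput in MB/s, where 1 MB = 10**6 bytes (the unit dd reports)."""
    return total_bytes / seconds / 1e6


line_rate = mbps_to_mb_per_s(500)                     # theoretical maximum
measured = throughput_mb_per_s(1073741824, 55.2374)   # dd read from the mount

print(f"line rate: {line_rate:.1f} MB/s")
print(f"measured:  {measured:.1f} MB/s")
print(f"share of line rate: {measured / line_rate:.0%}")
```

So the mount read is running at roughly a third of what the connection should allow.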

I tested the speed of the drive the cache is on with dd if=/dev/zero of=test.img bs=1G count=1 oflag=direct and the output is:

1+0 records in

1+0 records out

1073741824 bytes (1.1 GB, 1.0 GiB) copied, 6.65512 s, 161 MB/s

Testing reads with dd if=test.img of=/dev/null bs=1G yields:

1+0 records in

1+0 records out

1073741824 bytes (1.1 GB, 1.0 GiB) copied, 6.65512 s, 161 MB/s

My cache drive is an HDD, but with these figures I wouldn't have thought it would be a bottleneck, since cache writes should be sequential, just like dd?

I ran the first command on a few different files and it always seemed to cap out at 20MB/s, with typical figures around 15MB/s. I never ran it on the same file twice, because the file would already be cached.

Could this be a crypt problem, with my CPU not being able to decrypt fast enough? I've watched htop while reading and the CPU spikes to a maximum of 40%, quite periodically (because of the chunks?). I know there's a --drive-chunk-size flag, but I believe it only applies to uploads?

The HDD tests were run on the host, not inside the Docker container. When I run dd in Alpine, the transfer rate seems to be missing from the output.
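Since dd inside the container doesn't print a rate, one workaround would be to time the read myself. This is only a minimal sketch and assumes Python 3 is available wherever it runs, which is not the case in the stock container. The function name `measure_read` and the example path are hypothetical.

```python
import time


def measure_read(path: str, limit: int = 1 << 30, block: int = 1 << 20) -> float:
    """Read up to `limit` bytes from `path` in `block`-sized chunks and
    return the throughput in MB/s (1 MB = 10**6 bytes)."""
    total = 0
    start = time.monotonic()
    # buffering=0 avoids Python's own read buffer; the OS page cache still applies.
    with open(path, "rb", buffering=0) as f:
        while total < limit:
            chunk = f.read(min(block, limit - total))
            if not chunk:  # end of file reached before the limit
                break
            total += len(chunk)
    elapsed = time.monotonic() - start
    return total / elapsed / 1e6 if elapsed > 0 else float("inf")


# Example (hypothetical path on the mount):
# print(f"{measure_read('/data/gcrypt/some-large-file'):.1f} MB/s")
```

As with dd, the result is only meaningful for a file that is not already in the VFS cache or the OS page cache.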

Any ideas? I may be missing something here.