i did that; i'm using rclone on a seedbox. what keeps happening is that pCloud hits an access violation and then closes itself.

More than US$1,000 a year. That's for 1 user. Now I'm trying to get it all off.

geesh. You can get a legit 5-user GSuite for less than that.

well, GSuite has its own share of problems: leaky buckets, NDA compromises. it's very easy to share and not keep full ownership of the data.

so 5 users at $12.00 each = $60.00 per month.

for that, would i really get unlimited storage?

yes, but nothing is ever really 'unlimited'. but let's keep this thread on topic for @moo.

the problem isn't unlimited storage. The problem is rights. Say, for example, you have a disgruntled employee or a scammer who wants to steal trade secrets, tell lies, copy all the files out to a personal GDrive, or move files out of the company's drive. What defenses would you have?

From there you can see why GDrive isn't ideal. The same goes for many of the cloud-storage providers out there.

I don't get that. This is the command I'm running:

rclone sync "P:\Crypto Folder" remote: -v

If I change the remote from S3 to Backblaze, I get nothing but errors. Any ideas?

try this, which will give debug output:

rclone sync "P:\Crypto Folder" remote: -vv
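For longer runs, the same debug output can be saved to a file so the errors can be read afterwards. A minimal sketch, assuming a POSIX shell (for example Git Bash or WSL on Windows); `--log-file` is a standard rclone flag, while the `debug_sync` helper and its default `rclone.log` file name are hypothetical. Setting `RCLONE=echo` prints the command instead of running it.

```shell
#!/bin/sh
# Run rclone sync with debug output (-vv) written to a log file.
# RCLONE can be overridden, e.g. RCLONE=echo, to print the command as a dry check.
RCLONE="${RCLONE:-rclone}"

# debug_sync is a hypothetical helper:
#   $1 = source, $2 = destination, $3 = log file (defaults to rclone.log)
debug_sync() {
  "$RCLONE" sync "$1" "$2" -vv --log-file "${3:-rclone.log}"
}

# Usage (same source and remote as the command above):
# debug_sync "P:\Crypto Folder" remote:
```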

in my limited testing, i found b2 can be very slow, but based on what other forum posters report, i would say that b2 is a stable platform that can be trusted.

you can get a free trial at wasabi.com and see what hot storage is all about.

it is an s3 clone, so rclone works great with it.

i have a verizon fios 1Gbps connection and i can saturate that on wasabi.

but for me to do that, i had to do a lot of tweaking of my rclone command.

if you want to optimize b2, you will need to experiment, test and tweak.

https://rclone.org/b2/

"Backblaze recommends that you do lots of transfers simultaneously for maximum speed. In tests from my SSD equipped laptop the optimum setting is about --transfers 32"

there are a lot of b2 flags to tweak:

--b2-account string Account ID or Application Key ID

--b2-chunk-size SizeSuffix Upload chunk size. Must fit in memory. (default 96M)

--b2-disable-checksum Disable checksums for large (> upload cutoff) files

--b2-download-auth-duration Duration Time before the authorization token will expire in s or suffix ms|s|m|h|d. (default 1w)

--b2-download-url string Custom endpoint for downloads.

--b2-encoding MultiEncoder This sets the encoding for the backend. (default Slash,BackSlash,Del,Ctl,InvalidUtf8,Dot)

--b2-endpoint string Endpoint for the service.

--b2-hard-delete Permanently delete files on remote removal, otherwise hide files.

--b2-key string Application Key

--b2-test-mode string A flag string for X-Bz-Test-Mode header for debugging.

--b2-upload-cutoff SizeSuffix Cutoff for switching to chunked upload. (default 200M)

--b2-versions Include old versions in directory listings.
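Putting the docs' advice into a single command: a sketch, assuming a POSIX shell and the `remote:` from the earlier commands. `--transfers` and `--b2-chunk-size` are real rclone flags; the `b2_fast_sync` helper is hypothetical, 32 is the value quoted from the B2 docs rather than a tested setting, and 96M is just the listed default chunk size. Per the flag description, a chunk must fit in memory, so raising the chunk size and the transfer count together increases RAM use.

```shell
#!/bin/sh
# Sync to B2 with many simultaneous transfers, as the rclone B2 docs recommend.
# RCLONE can be overridden, e.g. RCLONE=echo, to print the command instead of running it.
RCLONE="${RCLONE:-rclone}"

# b2_fast_sync is a hypothetical helper:
#   $1 = source, $2 = B2 destination,
#   $3 = parallel transfers (default 32, from the docs),
#   $4 = upload chunk size (default 96M, the listed default; must fit in memory)
b2_fast_sync() {
  "$RCLONE" sync "$1" "$2" \
    --transfers "${3:-32}" \
    --b2-chunk-size "${4:-96M}" \
    -P
}

# Usage:
# b2_fast_sync "P:\Crypto Folder" remote:
# b2_fast_sync "P:\Crypto Folder" remote: 16 200M
```

Starting from a lower `--transfers` value and raising it while watching throughput is one way to find what a given connection and machine can handle.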

next related question.

can you access the pCloud Crypto Folder using the "pcloud" backend (https://rclone.org/pcloud/)?

i did create a pcloud remote in rclone, and while i could access the files, they were useless because they were encrypted.

i installed the pcloud software on my computer.

then the files showed up on the p: drive and rclone could access them as local files.

as i demonstrated, it worked well, no errors in limited testing.

related question.

is it possible for rclone to do 1 file at a time, instead of multiple at once?

i think this may solve my problem of copying files from the encrypted folder.

you should spend time reading the docs.

many of your basic questions would be answered.
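For the record, the relevant knobs are `--transfers` (files copied in parallel, default 4) and `--checkers` (files checked in parallel, default 8); setting both to 1 makes rclone handle one file at a time. A minimal sketch, assuming a POSIX shell; `copy_one_at_a_time` is a hypothetical helper, and whether this avoids the pCloud errors is untested.

```shell
#!/bin/sh
# Copy strictly one file at a time: a single transfer and a single checker.
# RCLONE can be overridden, e.g. RCLONE=echo, to print the command instead of running it.
RCLONE="${RCLONE:-rclone}"

# copy_one_at_a_time is a hypothetical helper: $1 = source, $2 = destination
copy_one_at_a_time() {
  "$RCLONE" copy "$1" "$2" --transfers 1 --checkers 1 -P
}

# Usage (source path and remote name taken from earlier in the thread):
# copy_one_at_a_time "P:\Crypto Folder" remote:
```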

Thanks for the help.

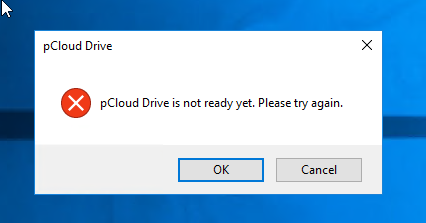

My issues are resolved, except for pCloud crashing all the time.

I'm storing ~3.5 TB on pCloud, and that's roughly US$900 (2 TB x US$400 lifetime, at the former price, plus US$99/year for Crypto on 2 TB).

I love pCloud, but lately their software drivers have started flaking out and I keep getting CRC and download errors. Critically, I only have a single copy of certain files, some of which are very personal, so I lost many files.

So after nearly a week of double-downloading, I've transferred ~2.5 TB to Backblaze and have ~1.5 TB of encrypted pCloud storage left to go.

There were so many errors, around ~500 MB worth of files that failed.

I've lost many files due to pCloud returning copy errors to rclone, but I'll live with it. They are old photos and files that I can't get back.

Wasabi is US$5.99 per TB per month; I'm using Backblaze instead, so maybe I save a dollar per TB (US$5.99 vs US$5.00), but I'm happy with Backblaze.

This is the command line I used, in case anyone wants to copy pCloud without the Crypto Folder:

rclone.exe copy pcloud: bz2:/BUCKET -P --exclude "Crypto Folder/**"

Beware that you will have to run this many times (5 or 6) to get all your files out. There will be many errors.
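The re-runs can be automated. rclone already retries a failed run on its own (`--retries`, default 3), and `rclone copy` skips files that already match on the destination, so each extra pass only picks up what failed before. A minimal sketch, assuming a POSIX shell; `run_until_clean` is a hypothetical helper that repeats any command until it exits with status 0 or a pass limit is hit.

```shell
#!/bin/sh
# run_until_clean is a hypothetical helper:
#   $1 = maximum number of passes, remaining args = the command to run.
# It re-runs the command until it exits with status 0 or the limit is reached.
run_until_clean() {
  max_passes=$1
  shift
  pass=1
  while [ "$pass" -le "$max_passes" ]; do
    if "$@"; then
      echo "clean run on pass $pass"
      return 0
    fi
    echo "pass $pass failed" >&2
    pass=$((pass + 1))
  done
  return 1
}

# Usage (the command from the post above, allowing up to 6 passes):
# run_until_clean 6 rclone copy pcloud: bz2:/BUCKET -P --exclude "Crypto Folder/**"
```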

Windows: for the Crypto Folder, you need to copy slowly, bit by bit, via Windows File Explorer.

Mac: don't bother. On a Mac, after 1 TB pCloud becomes so slow that you can't do anything meaningful. You're better off renting a Windows VM in the cloud to transfer out all your files.

I'm still in the middle of copying out the Crypto Folder...

This could possibly take another 5 months just to copy out all my files.

This is what happens daily with rclone: the driver hangs and then the P:\ drive gets dismounted.

If anyone has ideas for moving files out with anything (rclone, Explorer) without hitting the errors above on a daily basis, please let me know.