remote: is an alias for wasabieast2:aliasremote. i thought an alias would be easier to understand when posting scripts for other users.

i tried to run lsjson and pipe its output to a file, but i was getting errors on the command line that did not show up in the json file.
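the likely reason is that rclone writes its log lines (the NOTICE messages) to stderr, while the listing itself goes to stdout, so a plain redirect to a file only catches the json. here is a minimal python sketch that captures the two streams separately. the helper name `run_and_split` is made up for illustration, and the child process is a stand-in that imitates the behaviour so the sketch runs without rclone installed.

```python
import json
import subprocess
import sys


def run_and_split(cmd):
    """Run cmd and return (parsed JSON from stdout, non-empty stderr lines)."""
    proc = subprocess.run(cmd, capture_output=True, text=True, check=False)
    # stdout carries the JSON listing; an empty stdout is treated as an empty list
    listing = json.loads(proc.stdout) if proc.stdout.strip() else []
    # stderr carries log lines such as NOTICE messages
    notices = [line for line in proc.stderr.splitlines() if line.strip()]
    return listing, notices


# Stand-in child (an assumption, not rclone): prints JSON to stdout
# and a NOTICE-style line to stderr.
stand_in = [
    sys.executable,
    "-c",
    "import sys; print('[{\"Path\": \"a/a.txt\"}]'); "
    "print('NOTICE: : Failed to read metadata: object not found', file=sys.stderr)",
]

listing, notices = run_and_split(stand_in)
print("entries:", len(listing))
print("notices:", len(notices))
```

for a real run, the command list would be something like `["rclone", "lsjson", "-R", "--files-only", "remote:folder"]`.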

so here is the raw output for the alias and the actual remote, using both lsf and lsjson.

------------start remote:\folder

C:\data\rclone\scripts\rr\other\test>C:\data\rclone\scripts\rclone.exe lsf -R --files-only remote:\folder

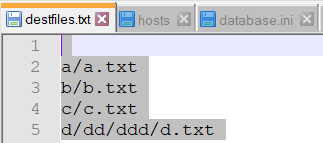

a/a.txt

b/b.txt

c/c.txt

------------end remote:\folder

------------start wasabieast2:aliasremote\folder

C:\data\rclone\scripts\rr\other\test>C:\data\rclone\scripts\rclone.exe lsf -R --files-only wasabieast2:aliasremote\folder

a/a.txt

b/b.txt

c/c.txt

------------end wasabieast2:aliasremote\folder

------------start remote:folder

C:\data\rclone\scripts\rr\other\test>C:\data\rclone\scripts\rclone.exe lsjson -R --files-only remote:folder

[

2020/03/28 14:37:52 NOTICE: : Failed to read metadata: object not found

2020/03/28 14:37:53 NOTICE: : Failed to read metadata: object not found

{"Path":"","Name":".","Size":0,"MimeType":"application/octet-stream","ModTime":"2020-03-28T14:37:52.610400200-04:00","IsDir":false,"Tier":"STANDARD"},

{"Path":"a/a.txt","Name":"a.txt","Size":22,"MimeType":"text/plain; charset=utf-8","ModTime":"2020-03-28T10:51:27.633838900-04:00","IsDir":false,"Tier":"STANDARD"},

{"Path":"b/b.txt","Name":"b.txt","Size":2,"MimeType":"text/plain","ModTime":"2020-03-27T17:21:40.772068600-04:00","IsDir":false,"Tier":"STANDARD"},

{"Path":"c/c.txt","Name":"c.txt","Size":58,"MimeType":"text/plain; charset=utf-8","ModTime":"2020-03-28T10:51:32.639289600-04:00","IsDir":false,"Tier":"STANDARD"}

]

C:\data\rclone\scripts\rr\other\test>echo ------------end remote:folder

------------end remote:folder

------------start wasabieast2:aliasremote\folder

C:\data\rclone\scripts\rr\other\test>C:\data\rclone\scripts\rclone.exe lsjson -R --files-only wasabieast2:aliasremote\folder

[

2020/03/28 14:37:57 NOTICE: : Failed to read metadata: object not found

2020/03/28 14:37:57 NOTICE: : Failed to read metadata: object not found

{"Path":"","Name":".","Size":0,"MimeType":"application/octet-stream","ModTime":"2020-03-28T14:37:57.420412000-04:00","IsDir":false,"Tier":"STANDARD"},

{"Path":"a/a.txt","Name":"a.txt","Size":22,"MimeType":"text/plain; charset=utf-8","ModTime":"2020-03-28T10:51:27.633838900-04:00","IsDir":false,"Tier":"STANDARD"},

{"Path":"b/b.txt","Name":"b.txt","Size":2,"MimeType":"text/plain","ModTime":"2020-03-27T17:21:40.772068600-04:00","IsDir":false,"Tier":"STANDARD"},

{"Path":"c/c.txt","Name":"c.txt","Size":58,"MimeType":"text/plain; charset=utf-8","ModTime":"2020-03-28T10:51:32.639289600-04:00","IsDir":false,"Tier":"STANDARD"}

]

------------end wasabieast2:aliasremote\folder
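in both lsjson listings the first entry has an empty Path and the Name ".", which lsf does not print. assuming that entry is a zero-byte placeholder object and not a real file (the output above does not confirm this), a script reading the json could skip it. a minimal python sketch, with a made-up helper name `drop_placeholder_entries` and a trimmed copy of the entries above as sample data:

```python
import json


def drop_placeholder_entries(entries):
    """Keep only entries that have a non-empty Path."""
    return [entry for entry in entries if entry.get("Path")]


# Trimmed sample based on the lsjson output above (fields shortened).
sample = json.loads("""
[
  {"Path": "", "Name": ".", "Size": 0, "IsDir": false},
  {"Path": "a/a.txt", "Name": "a.txt", "Size": 22, "IsDir": false},
  {"Path": "b/b.txt", "Name": "b.txt", "Size": 2, "IsDir": false},
  {"Path": "c/c.txt", "Name": "c.txt", "Size": 58, "IsDir": false}
]
""")

files = drop_placeholder_entries(sample)
print([entry["Path"] for entry in files])
```

after filtering, the remaining paths match what lsf shows for the same folder.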