Foritus

October 6, 2018, 1:45pm

1

Hello!

I’m encountering some pretty high memory usage when performing a sync from the local filesystem to a remote encrypted fs (which itself wraps an Azure Blob Storage account). I suspect this may be because I have quite a lot of smallish files to sync. Key info:

Command being run: `rclone --config /path/to/my/version/controlled/rclone.conf --checksum --retries 20 --suffix 2018-10-04 --backup-dir azure-encrypted:/backups.history/ --quiet --bwlimit 384k sync /home/backup-user/backups/ azure-encrypted:/backups`

Rclone Version: 1.42

Total size of files being sync’ed: 855GB

Number of files being sync’ed: 1,098,342 (1098342 without locale-specific separators)

The backup is using 5.325GB of physical RAM (12.408GB virtual), which seems pretty extreme. Is it caching the file checksums in memory or something?

Any help/insights would be most appreciated.

ncw

October 6, 2018, 4:50pm

2

That does seem like a lot of RAM! A sync shouldn't really use very much memory at all.

First I'd try with 1.43.1 or, even better, the latest beta.

Then you could try memory profiling rclone which would at least tell us what is using up the memory.
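For anyone following along, here is a rough sketch of what the profiling step can look like. It assumes rclone is started with its remote control server enabled (`--rc`) on the default address `localhost:5572`, and that the Go toolchain is installed; the `RC_ADDR` and `HEAP_URL` variable names are just for illustration. Adjust the address if you pass `--rc-addr`.

```shell
#!/bin/sh
# Sketch only: localhost:5572 is assumed to be the rc address (rclone's default).
RC_ADDR="localhost:5572"
HEAP_URL="http://${RC_ADDR}/debug/pprof/heap"

# Terminal 1: re-run the sync with the remote control server enabled by adding --rc, e.g.
#   rclone --rc [same flags as before] sync /home/backup-user/backups/ azure-encrypted:/backups

# Terminal 2: once memory has grown, fetch the heap profile and print the top allocators.
if command -v go >/dev/null 2>&1; then
    go tool pprof -text "${HEAP_URL}" \
        || echo "could not fetch ${HEAP_URL}; is rclone running with --rc?"
else
    echo "Go toolchain not found; install Go, then run: go tool pprof -text ${HEAP_URL}"
fi
```

Running `go tool pprof` without `-text` drops you into an interactive `(pprof)` prompt instead, where typing `text` prints the same table.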

Foritus

October 6, 2018, 6:01pm

3

Just tried the same command with v1.43-136-g87e1efa9-beta and it ballooned up to 10GB in about an hour. Will run it now with the profiler enabled and post the results.

Foritus

October 6, 2018, 6:35pm

4

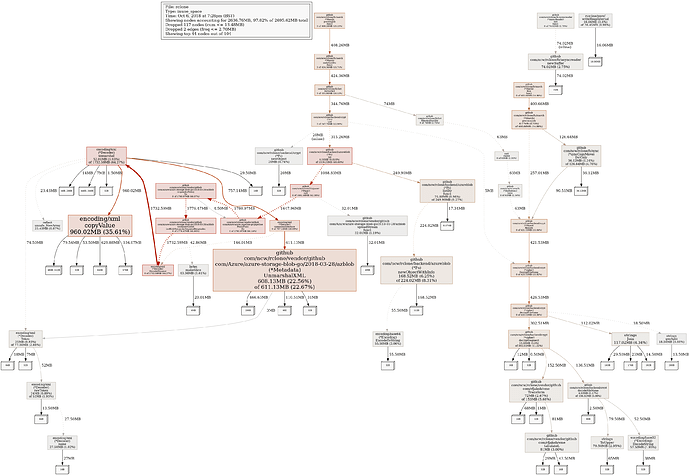

Profiling indicates a lot of leaks in XML-related code?

(pprof) text

ncw

October 6, 2018, 10:55pm

5

Thanks for doing the profiling - very helpful.

That does look like a bug, probably in the azure-storage-blob-go library rather than rclone…

Can you put the above into a new issue on GitHub and I’ll get the Azure blob maintainer sandeepkru to take a look at it - thanks. (Put a link to the forum post too please.)

Foritus

October 7, 2018, 12:00am

6