Does anyone know a good local dedupe tool that can take 400,000 files mounted with rclone mount and dedupe them PURELY by size and name?

dupeGuru APPEARS to be able to do this, but when I tried it, it hit 16 GB of RAM at 97% done with 22,000 duplicates found, then 28 GB of RAM at 98% done. I clicked cancel, by which point it was using 31 GB of RAM, and I force-closed the task without ever seeing a duplicate file listed.

Was all that RAM for GUI reasons? Or was it hashing my files despite my trying my best to tell it not to?

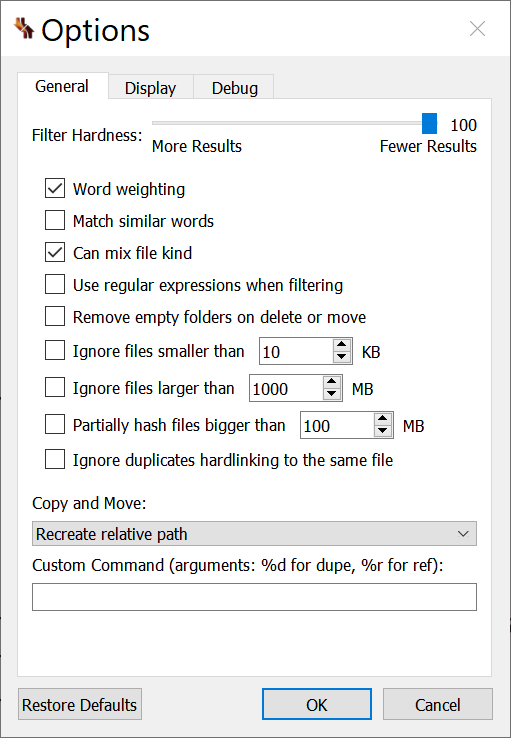

I ran it in filename mode with these settings. The partial-hash setting is what leaves me worried that it was foolishly trying to hash all my files, but the dupeGuru documentation is light on explaining this.

I’ve never used dupeGuru before, though, and have no loyalty to it. Rclone’s deduping tool would be fine, except that it matches on full path rather than just filename, and my files are stored in foolishly arranged file paths (that’s the mistake I made that requires me to do all this).

TLDR: I just want to compare 400,000 filenames and see my duplicates. I want to match on name and size only. I do not want to use the full path (rules out rclone), and I do not want to compute hashes (rules out HashMyFiles). Can someone please make a recommendation?
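
To be concrete about what I mean by name-and-size matching, here is a minimal Python sketch of the logic I’m after. It is an illustration, not a tested tool: the function name `find_name_size_duplicates` is made up for this post. It assumes the rclone mount can be walked like a normal directory and that file sizes come from metadata, so nothing is opened or hashed.

```python
import os
from collections import defaultdict


def find_name_size_duplicates(root):
    """Group files under root by (filename, size in bytes).

    Returns a dict mapping (name, size) -> sorted list of full paths,
    containing only groups with two or more files. File contents are
    never opened or hashed; only directory listings and stat metadata
    are used.
    """
    groups = defaultdict(list)
    stack = [root]  # explicit stack instead of recursion for deep trees
    while stack:
        current = stack.pop()
        try:
            with os.scandir(current) as entries:
                for entry in entries:
                    if entry.is_dir(follow_symlinks=False):
                        stack.append(entry.path)
                    elif entry.is_file(follow_symlinks=False):
                        size = entry.stat(follow_symlinks=False).st_size
                        # Key on the bare filename, NOT the full path.
                        groups[(entry.name, size)].append(entry.path)
        except OSError as exc:
            # Unreadable directory or entry: report it and keep going.
            print(f"skipping {current}: {exc}")
    return {key: sorted(paths) for key, paths in groups.items() if len(paths) > 1}
```

Calling `find_name_size_duplicates("/path/to/mount")` (placeholder path) and printing each key with its list of paths would give the report I want. Since it only keeps one path string per file, I would expect memory use for 400,000 files to stay far below what I saw above, though I have not measured it.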