What is the problem you are having with rclone?

I'm wondering whether this is expected behavior. When using --backup-dir with a combine remote that combines crypt and non-crypt upstreams (on the same base remote), files are not server-side moved into the backup-dir in their encrypted form. Instead, they get decrypted and copied into the backup-dir, with metadata that reveals their previous encrypted names.

For example, assume the following setup (configs shared below).

    /
    ├── NormalStuff
    │   └── somenormalfolder
    ├── SecretStuff
    │   └── somesecretfolder
    │       └── somesecretfile.txt
    └── backupdir

Then rename a file locally (here somesecretfile.txt to somesecretfile2.txt) and sync:

    rclone sync /localstuff/SecretStuff MyCombinedStuff:SecretStuff -Mv --backup-dir MyCombinedStuff:backupdir
    2024/01/14 00:31:21 INFO : somesecretfolder/somesecretfile2.txt: Copied (new)
    2024/01/14 00:31:23 INFO : somesecretfolder/somesecretfile.txt: Copied (new)
    2024/01/14 00:31:24 INFO : somesecretfolder/somesecretfile.txt: Deleted
    2024/01/14 00:31:24 INFO : somesecretfolder/somesecretfile.txt: Moved into backup dir
    2024/01/14 00:31:24 INFO :
    Transferred: 32 B / 32 B, 100%, 10 B/s, ETA 0s
    Checks: 3 / 3, 100%
    Deleted: 2 (files), 0 (dirs)
    Renamed: 1
    Transferred: 2 / 2, 100%
    Elapsed time: 3.9s

Now we have this:

    rclone tree MyCombinedStuff:
    /
    ├── NormalStuff
    │   └── somenormalfolder
    ├── SecretStuff
    │   └── somesecretfolder
    │       └── somesecretfile2.txt
    └── backupdir
        └── somesecretfolder
            └── somesecretfile.txt

    6 directories, 2 files

And on the underlying remote, the file is no longer encrypted.

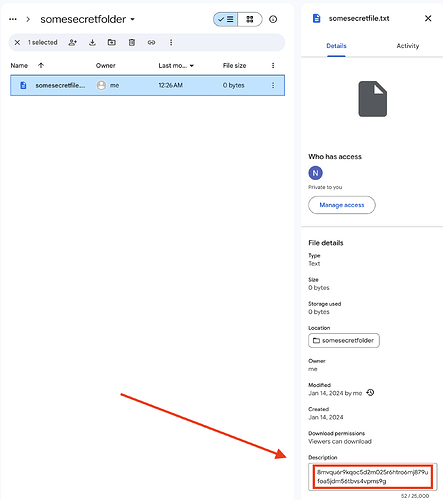

Additionally, on at least Google Drive, the file retains metadata that reveals its former encrypted name:

--backup-dir normally requires server-side move, so my initial assumption was that the base remote would move the files in their existing form. I understand why it's not doing that, and it's easy enough to work around (by putting the backup-dir on the encrypted side) -- just wondering if it's the intended behavior. (I suppose the other way would have problems too, as it would result in mixing crypt with non-crypt?)
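A rough sketch of that workaround, assuming the sync destination and the backup directory are both placed under the crypt upstream, so the move never has to cross from crypt to non-crypt. The `current` and `backupdir` subfolder names under `SecretStuff` are hypothetical and not part of the config shared below; `--dry-run` only reports what would be done:

```shell
# Hypothetical layout: both paths sit under the crypt upstream "SecretStuff",
# as sibling folders, so destination and backup dir do not overlap.
dest="MyCombinedStuff:SecretStuff/current"
backup="MyCombinedStuff:SecretStuff/backupdir"

# --dry-run makes no changes; drop it once the reported actions look right.
rclone sync /localstuff/SecretStuff "$dest" -Mv --dry-run --backup-dir "$backup"
```

Since both paths then resolve to the same crypt upstream, the backed-up files should stay encrypted on the base remote, though this exact layout is an assumption rather than something verified here.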

Relatedly, I am wondering if this is a mistake, or if I'm somehow misunderstanding it:

The comment suggests it respects --backup-dir, but wouldn't it always be nil? The function it returns is:

func DeleteFileWithBackupDir(ctx context.Context, dst fs.Object, backupDir fs.Fs) (err error) {

which does:

Run the command 'rclone version' and share the full output of the command.

    rclone v1.65.1
    - os/version: darwin 13.6.3 (64 bit)
    - os/kernel: 22.6.0 (arm64)
    - os/type: darwin
    - os/arch: arm64 (ARMv8 compatible)
    - go/version: go1.21.5
    - go/linking: dynamic
    - go/tags: cmount

Which cloud storage system are you using? (eg Google Drive)

Google Drive, Crypt, Combine

The command you were trying to run (eg rclone copy /tmp remote:tmp)

rclone sync /localstuff/SecretStuff MyCombinedStuff:SecretStuff -Mv --backup-dir MyCombinedStuff:backupdir

The rclone config contents with secrets removed.

    [MyCombinedStuff]
    type = combine
    upstreams = NormalStuff=TestDrive:Test SecretStuff=SecretStuff: backupdir=TestDrive:Backup

    [TestDrive]
    type = drive
    client_id = XXX
    client_secret = XXX
    scope = drive
    token = XXX
    team_drive =
    root_folder_id = XXX

    [SecretStuff]
    type = crypt
    remote = TestDrive:secretstuff
    password = XXX

A log from the command with the -vv flag

Included above.