What is the problem you are having with rclone?

Using my own API client ID, I get many quota errors. This is from the overnight run:

```
2021/02/21 05:13:04 ERROR : : error reading destination directory: couldn't list directory: googleapi: Error 403: User Rate Limit Exceeded. Rate of requests for user exceed configured project quota. You may consider re-evaluating expected per-user traffic to the API and adjust project quota limits accordingly. You may monitor aggregate quota usage and adjust limits in the API Console: https://console.developers.google.com/apis/api/drive.googleapis.com/quotas?project=989441034457, userRateLimitExceeded
2021/02/21 05:13:04 ERROR : Encrypted drive 'Media:00 - Programmi': not deleting directories as there were IO errors
2021/02/21 05:13:04 INFO : There was nothing to transfer
2021/02/21 05:13:04 ERROR : Attempt 4/5 failed with 1 errors and: couldn't list directory: googleapi: Error 403: User Rate Limit Exceeded. Rate of requests for user exceed configured project quota. You may consider re-evaluating expected per-user traffic to the API and adjust project quota limits accordingly. You may monitor aggregate quota usage and adjust limits in the API Console: https://console.developers.google.com/apis/api/drive.googleapis.com/quotas?project=989441034457, userRateLimitExceeded
2021/02/21 05:13:11 INFO :
Transferred: 0 / 0 Bytes, -, 0 Bytes/s, ETA -
Elapsed time: 2h47m30.9s
```
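To see how often and when the rate limit was hit during the overnight run, the attached log can be scanned for the `userRateLimitExceeded` reason. This is a minimal sketch, assuming every log line starts with the `YYYY/MM/DD HH:MM:SS LEVEL : message` layout shown in the excerpt above; the function name `count_rate_limit_errors` and the per-minute grouping are illustrative choices, not anything rclone provides.

```python
import re
from collections import Counter

# Captures "YYYY/MM/DD HH:MM" from the start of a log line such as
# "2021/02/21 05:13:04 ERROR : ..." (layout assumed from the excerpt above).
LINE_RE = re.compile(r"^(\d{4}/\d{2}/\d{2} \d{2}:\d{2}):\d{2} ")


def count_rate_limit_errors(lines):
    """Count lines mentioning userRateLimitExceeded, grouped by minute."""
    per_minute = Counter()
    for line in lines:
        if "userRateLimitExceeded" not in line:
            continue
        match = LINE_RE.match(line)
        # Lines without a recognizable timestamp are grouped under "unknown".
        per_minute[match.group(1) if match else "unknown"] += 1
    return per_minute
```

Usage would be something like opening `media_20210221_ 20001.log` with `open(path, encoding="utf-8", errors="replace")` and passing the file object in; a cluster of counts in a few minutes points to bursts of listing rather than a steady overload.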

Since I'm using the `--fast-list` option, I didn't expect any of these errors.

The folder contains 240,848 files in 69,429 folders, all of which have already been backed up.
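The folder counts above give a rough lower bound on how many Drive API requests are needed just to list the destination when it is listed folder by folder (rclone's documentation describes `--fast-list` as using fewer transactions at the cost of more memory; this sketch only covers the folder-by-folder case). It is a back-of-the-envelope estimate under stated assumptions, not a description of rclone internals: at least one Drive `files.list` request per folder, at most 1000 entries per request (rclone's default `--drive-list-chunk`), and roughly 10 requests per second, matching rclone's default `--drive-pacer-min-sleep` of 100 ms. The function names are hypothetical.

```python
import math


def min_list_calls(folders: int, files: int, page_size: int = 1000) -> int:
    """Lower bound on list requests when every folder is listed separately.

    Assumes the folder count excludes the top-level folder, so one extra
    listing is added for it. Each folder needs at least one request, and
    the total can never be below (all entries / page size).
    """
    directories = folders + 1  # +1 for the top-level folder itself (assumption)
    entries = files + folders  # sub-folders also appear in their parent's listing
    return max(directories, math.ceil(entries / page_size))


def seconds_at_rate(calls: int, calls_per_second: float) -> float:
    """Time needed to issue `calls` requests at a steady rate."""
    return calls / calls_per_second


calls = min_list_calls(folders=69_429, files=240_848)
hours = seconds_at_rate(calls, 10.0) / 3600  # 10 req/s ~ 100 ms pacer sleep (assumption)
print(calls, round(hours, 2))
```

Under these assumptions the lower bound is 69,430 requests, about 1.9 hours of listing at 10 requests per second; for comparison, the log above reports an elapsed time of 2h47m30.9s.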

What is causing these errors?

What is your rclone version (output from rclone version)

```
rclone v1.53.1
- os/arch: windows/amd64
- go version: go1.15
```

I also tried 1.54 (the latest version). The overnight log is from 1.53; the other log is from 1.54.

Which OS you are using and how many bits (eg Windows 7, 64 bit)

Windows 10 Pro, 64-bit

Which cloud storage system are you using? (eg Google Drive)

G Suite (Google Drive)

The command you were trying to run (eg rclone copy /tmp remote:tmp)

```
N:\rclone\rclone.exe sync "H:\00 - Programmi" Media:"00 - Programmi" --backup-dir=Media:"00 - Backup/00 - Programmi/%mydate%_%mytime%" --fast-list --delete-during --checksum --verbose --transfers %RCLONE_TRANSFERS% --checkers %RCLONE_CHECKERS% --bwlimit %RCLONE_BWLIMIT% --contimeout 60s --timeout 300s --retries 5 --low-level-retries 10 --rc --stats %RCLONE_STATS% --stats-file-name-length 150 --exclude "desktop.ini" --exclude "*.log" --exclude "*.db-wal" --exclude "programdata/cache/**" --copy-links --log-file "r:\rclone scripts\media_%mydate%_%mytime%.log"
```
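`%mydate%` and `%mytime%` are set elsewhere in the batch script, which isn't shown. Judging from the attached log names (`media_20210221_ 20001.log`), `%mytime%` seems to keep the leading space Windows uses for hours before 10:00, so both the `--backup-dir` and the `--log-file` paths end up with a space in them. A minimal sketch of building the same two paths from a zero-padded timestamp, assuming the `%mydate%_%mytime%` format is `YYYYMMDD_HHMMSS`; the function name is hypothetical and Python is only used for illustration, not part of the original script.

```python
from datetime import datetime
from typing import Tuple


def backup_and_log_paths(now: datetime) -> Tuple[str, str]:
    """Build the --backup-dir and --log-file values used in the command above.

    strftime's %H is always two digits, so hours before 10:00 produce
    "02" instead of " 2" and no space ends up in the paths.
    """
    stamp = now.strftime("%Y%m%d_%H%M%S")  # e.g. 20210221_020001
    backup_dir = f"Media:00 - Backup/00 - Programmi/{stamp}"
    log_file = rf"r:\rclone scripts\media_{stamp}.log"  # raw string keeps the backslashes
    return backup_dir, log_file
```

Inside the batch file itself, the usual equivalent is replacing spaces in the variable with zeros, e.g. `set mytime=%mytime: =0%`.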

The rclone config contents with secrets removed.

```
[gdrive]
type = drive
scope = drive
root_folder_id = 17MzJZqHdsxdkEzWdWl8gxR-o4bfByJd1
token = {"access_token":"xxx","token_type":"Bearer","refresh_token":"xxx","expiry":"2021-02-21T09:41:32.6487738+01:00"}
client_id = xxxxxx
client_secret = xxxxxx

[Media]
type = crypt
remote = gdrive:01
filename_encryption = standard
directory_name_encryption = true
password = xxxxxx
```
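The crypt remote `Media` wraps `gdrive:01`, so every request it makes goes through the `[gdrive]` remote and is counted against the API project that its `client_id` belongs to. Because rclone's config file is INI-style, Python's `configparser` can follow that chain as a quick check. A minimal sketch, assuming each crypt remote wraps another named remote in `name:path` form (as in this config); the function name `backing_remote` is hypothetical.

```python
import configparser
from typing import Tuple


def backing_remote(config_text: str, name: str) -> Tuple[str, str, bool]:
    """Follow `remote = other:path` links from a crypt remote down to the
    first non-crypt remote; return (name, type, whether client_id is set).

    Assumes every crypt remote points at another named remote ("name:path").
    """
    cfg = configparser.ConfigParser(interpolation=None)  # values may contain '%'
    cfg.read_string(config_text)
    seen = set()
    while cfg[name].get("type") == "crypt":
        if name in seen:
            raise ValueError(f"remote loop at {name!r}")
        seen.add(name)
        # "gdrive:01" -> "gdrive"; the part after ':' is a path inside that remote
        name = cfg[name]["remote"].split(":", 1)[0]
    section = cfg[name]
    return name, section.get("type", ""), bool(section.get("client_id"))
```

For the config above this returns `("gdrive", "drive", True)`, i.e. the quota errors are reported against the personal client ID's project rather than rclone's shared one.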

A log from the command with the -vv flag

Overnight log:

media_20210221_ 20001.log (315.3 KB)

Log of a rerun with the `-vv` flag, for the biggest folder only:

media_20210221_ 91642.log (645.9 KB)

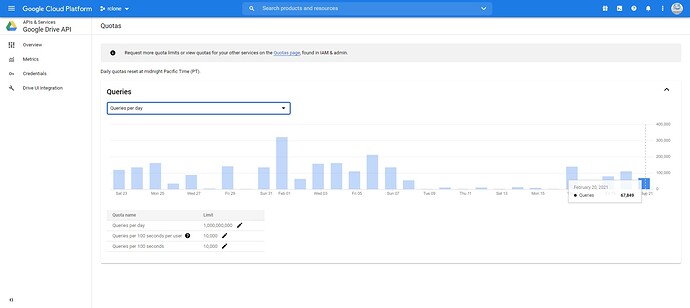

Additionally, here is my daily quota usage:

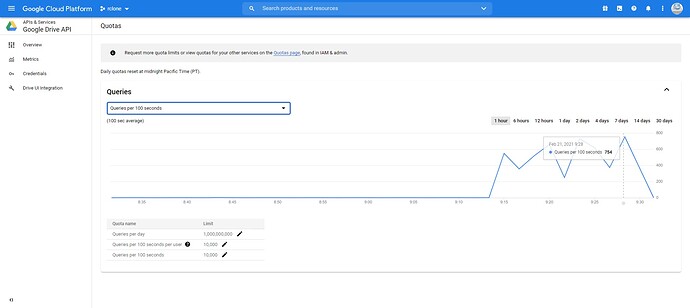

Usage per 100 seconds: