Google Cloud Compute trial: some speeds from using it to move data cloud to cloud

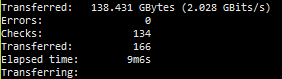

2 rclone instances running 30 transfers

the speed is sexy…

I was thinking about using it to sync gdrive encrypted with my gdrive unencrypted.

How much would the cheapest instance for it cost?

Nice! Yeah how much do instances run?

The cheapest is around $7/month, but more threads seem to help get better speeds, though only when doing Google to Google. They go from about $6 to $140 for the high-CPU setups. As I'm just using the trial I won't be using it long; I just want to clone clouds as a backup fast, and then I can use, say, a cheap 100 Mbps server for more frequent syncing of the clouds so they all stay the same.
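For anyone wondering what a many-transfer copy like this might look like, here is a minimal sketch. The remote names `src:` and `dst:` are placeholders (not from the posts above), and the function only prints the command so it can be reviewed first; `--transfers` is the rclone flag that sets how many files are copied in parallel.

```shell
#!/bin/sh
# Placeholder remote names; use the ones defined in your own `rclone config`.
SRC="src:"
DST="dst:"
TRANSFERS=30   # number of parallel file transfers, as mentioned in the thread

# Print the rclone command instead of running it, so it can be checked first.
build_copy_cmd() {
    echo "rclone copy $SRC $DST --transfers $TRANSFERS -v"
}

build_copy_cmd
```

Once the printed command looks right, it can be pasted into the shell and run as-is.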

This was earlier today on Google cloud compute. I was pulling off of an amazon cloud drive with Crypt and uploading to google drive unencrypted, it was crazy. I forgot to get a cap of nethogs but I sure was watching.

Don't do it with Amazon, as they will lock your account for high bandwidth usage.

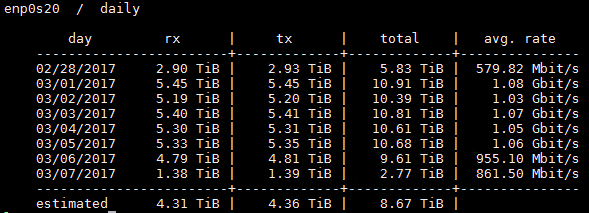

I'm syncing all my clouds on an online.net server, and after a couple of days of full 1Gbit usage my account got locked. I solved it by calling support, but they warned me that they won't unlock it again if the high bandwidth usage continues.

While I was syncing clouds I was also uploading at an average of 80MB, plus all the downloads done by the Plex server.

P.S. On my sync server I've now limited Amazon to 50MB; let's see if I get locked again.
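To put those rates in perspective, here is a rough back-of-the-envelope sketch. It assumes the "50MB" cap means about 50 MB/s sustained (the post does not say) and uses decimal units; `daily_tb` is just an illustrative helper name. In rclone, a cap like this would typically be set with the `--bwlimit` flag.

```shell
#!/bin/sh
# Rough daily transfer volume in TB for a sustained rate given in MB/s.
# Decimal units: 1 TB = 1,000,000 MB; a day has 86,400 seconds.
daily_tb() {
    awk -v rate="$1" 'BEGIN { printf "%.2f\n", rate * 86400 / 1000000 }'
}

daily_tb 50    # assumed 50 MB/s cap
daily_tb 125   # a saturated 1 Gbit/s link is about 125 MB/s

# A cap like this is typically applied with rclone's --bwlimit flag,
# e.g. `--bwlimit 50M` added to the sync command.
```

So even the capped rate can still move several terabytes a day if it runs around the clock.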

Yeah, after this last lock I'm done with ACD too. Too much headache, really. I'll keep it as a backup, but honestly with a nice Plex scanner script, Drive is the way to go.

Alright thanks for the heads up, I’ll change that. @MartinBowling what script are you using? I think I’m now going to go the same route with using ACD as a backup. I got a ban yesterday, that I believe was because of plex.

@Matt_H I used these scripts from @ajki as a start:

https://github.com/ajkis/scripts/blob/master/plex/plexgeneratefolderlist

https://github.com/ajkis/scripts/blob/master/plex/plexrefreshfolderlist

So you’re using rclone mount with gdrive as opposed to node-gdrive-fuse by utilizing this script?

Curious what caused you to switch and how rclone has performed by comparison.

@Seigele I have one node-gdrive-fuse mount and one rclone mount right now, comparing the two. Initial reaction is that the rclone mount seems to perform a little better; I notice it most with large files, 20GB or over. The rclone mount also needs less babysitting.

I’m not a programmer, but looking at ajki’s scripts it appears to me that in order for this to work you’d need to be downloading and uploading on the same server, correct?

I’m currently using sonarr/radarr to auto update my libraries, and use sshfs to connect the files waiting to be uploaded to my Plex server. I like keeping these two servers separate for obvious reasons, but just based on my setup here, do you think I’d benefit from using rclone + gdrive if I haven’t had any issues with node-gdrive-fuse?

My mount hasn’t dropped in weeks and the performance is miles ahead of ACD, so I’m curious if switching over is worth the effort as things stand now.

Thanks

node-gdrive-fuse is very stable, but unfortunately it's discontinued and hasn't been updated in over a year. I was using it for a few days and it was very fast. The only problem it caused me was that it sometimes wasn't showing new files in its database, and I had to unmount it, delete the database, and mount it again.

Right now I'm using rclone mount for my GDrive and it's working well so far, and the buffering is good. I haven't been able to put much pressure on it yet to see how it holds up under load.

acd_cli is also running on my computer. It's not very fast, I think, but so far it's very stable and has never dropped the mount for me.

As for switching, I started my mount in one location with ocaml-fuse and synced the library in Plex, then I mounted that same location with node-gdrive-fuse and now with rclone. So it wasn't a hassle, just a matter of changing the code I was using, unless you want some encryption, which I'm not using.

Sorry but I’m not sure exactly what this means, could you clarify? Are you using multiple mounts on the same google account in order to avoid getting banned? It sort of seems like that’s what you’re saying.

Also if you’re only using rclone would you mind going into more detail about how you went about setting it up and ensuring you don’t get banned?

@Seigele his scripts are for separate boxes: one for downloading and syncing to the clouds, and one with Plex on it.

I wouldn't bother switching now if I were you. I think @ncw said caching was coming to Drive fairly soon, so I would at least wait till then before even thinking about it, and then wait till some other people test it hehe

Well, I haven't been banned with either Plex Cloud or rclone. They're both running off my Google Drive and so far they're doing OK.

(I have Plex Cloud, and then I have my own Plex locally, which runs on acd_cli (Amazon) and rclone (GDrive).)

As for setup, I only have mount, I don’t have sync or anything else.

So for me it was easy to just use one mount point for each application, so my Plex still thinks the data comes from the same place. It doesn't care what app you use to mount it, as long as the files show up in the same location.

Sorry for the confusion.

Gotcha, how big is your library if you're able to use Google Drive + rclone as is without any bans?

As of right now it’s 60TB

You’re giving me more questions than answers lol. How are you using vanilla rclone & google drive without getting banned when it scans?

Haha, that was funny. Honestly I'm not good at this, but this is what I'm using to mount my drive:

    #!/bin/sh
    # Remount loop. rclone mount runs in the foreground, so each pass of the
    # loop only starts after the previous mount has exited.
    MNT=/Users/danial/mtng   # absolute path, so the script works from any directory
    n=1
    while true; do
        # find prints the directory only if it is empty, i.e. nothing is mounted
        if [ -n "$(find "$MNT" -prune -empty)" ]; then
            echo "=============================================="
            echo "System was down at $(date)"
            echo "=============================================="
        else
            echo "=============================================="
            echo "System was OK at $(date)"
            echo "=============================================="
        fi
        # Both branches did the same thing: clear any stale mount, then mount again
        umount "$MNT"
        rclone mount --allow-other --dir-cache-time 60m --buffer-size 200M --max-read-ahead 200M --retries 3 --timeout 30s -vv gdrive: "$MNT"
        n=$((n+1))
    done

I have this code as an sh file (drive.sh) and I just run that and nothing else.

Technically I know there is something wrong with my if statement in there, but the mount hasn't dropped yet, so I can't see which part is wrong.
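One possible alternative to the empty-directory check in the script, sketched under the assumption that the system's `mount` command lists active mounts as `<device> on <path> ...` (true on macOS and most Linux setups, but worth checking on yours). The helper name `is_mount_point` is made up for this example.

```shell
#!/bin/sh
# Succeeds if the given path shows up as a mount point in `mount`-style
# output read from stdin (lines of the form "<device> on <path> ...").
is_mount_point() {
    grep -qF -- " on $1 "
}

MNT=/Users/danial/mtng   # mount path taken from the script above

if mount | is_mount_point "$MNT"; then
    echo "mounted"
else
    echo "not mounted"
fi
```

Unlike the `find ... -empty` test, this does not mistake a mounted but empty drive for a dropped mount, and it does not need to list the remote's contents.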