Anything on Linux works like that. You can delete a file that is in use, but the space isn't returned until the process using the file closes it or is terminated.
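A minimal demo of this in plain POSIX shell. The 1 MiB size and the temporary file are arbitrary choices for illustration. The file is deleted while descriptor 3 still holds it open, so its data stays readable until that descriptor is closed:

```shell
#!/bin/sh
# Create a 1 MiB temporary file (size chosen arbitrarily for the demo).
tmp=$(mktemp)
dd if=/dev/zero of="$tmp" bs=1024 count=1024 2>/dev/null

exec 3<"$tmp"   # open the file for reading on descriptor 3
rm "$tmp"       # delete it: the name disappears from the directory...

# ...but the data is still there and readable through the open descriptor.
still_readable=$(wc -c <&3 | tr -d ' ')
echo "bytes still readable after rm: $still_readable"

exec 3<&-       # closing the last open descriptor is what frees the space
```

While such a file is still open, `lsof +L1` lists it among the deleted-but-open files, which is a quick way to find out what is holding the space.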

Ah, I didn't know that. Thanks!

Hello, perhaps I am doing something wrong...

I am using:

rclone mount team1pruebacachevfs:/ /home/ubuntu/team1pruebacache2/ --allow-other --vfs-read-ahead 10G --vfs-cache-mode full --vfs-cache-max-age 48h --vfs-cache-max-size 10G

Running rclone v1.52.3-294-g61c7ea40-beta.

It works fantastically and stores the file in the cache nicely! But I have set 48 hours (--vfs-cache-max-age 48h). In the morning I tested it: I played a file and it was downloaded to the cache. I checked the cache at night and it was still there, but the next morning the cache was empty...

Am I using the wrong parameter?

I have also checked the scheduled cron jobs and disabled them all, but the problem is the same.

Many thanks.
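As a rough sanity check, assuming --vfs-cache-max-age is counted from each file's last access (my reading of the rclone docs), a file played in the morning should only be about 24 hours old the next morning, well inside a 48h limit. The hypothetical `is_expired` helper below is a toy model of that rule, not rclone's code:

```shell
#!/bin/sh
# Toy model (not rclone's code) of the --vfs-cache-max-age rule.
# Assumption: the age is counted from the file's last access time.
# Arguments: last access (epoch seconds), now (epoch seconds), max age in hours.
is_expired() {
  last_access=$1
  now=$2
  max_age_hours=$3
  [ $((now - last_access)) -gt $((max_age_hours * 3600)) ]
}

# A file last read 24 hours ago, with a 48h max age:
if is_expired 0 86400 48; then echo "evicted"; else echo "still cached"; fi
```

If the cache is empty after only a day, something other than the age limit (the size limit, or another process) is the more likely cause, which is what a debug log would show.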

You'd have to share a debug log and reproduce the issue.

OK, I'll mount with a log file to see what happens, and will post it...

Many thanks.

If the file size is exactly 10G, then even a small read of another file will cause the first file to be removed from the cache. This is because you have also set the cache max size to 10G.

Generally, the max size can be set higher, depending on how much disk space is available.
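To illustrate, here is a toy model (not rclone's actual code), assuming the cache evicts the least recently accessed files first once the total goes over --vfs-cache-max-size. The file names, sizes (in G) and access times are made up:

```shell
#!/bin/sh
# Toy model (not rclone's code): when the cache is over max_size, evict the
# least recently accessed files until it fits again. Sizes are in G.
max_size=10

# One line per cached file: last_access_time size name (hypothetical values)
cache='100 10 fileA.mkv
200 1 fileB.mkv'

# Sort oldest access first, then drop files until the total is within max_size.
evicted=$(printf '%s\n' "$cache" | sort -n | awk -v max="$max_size" '
  { size[NR] = $2; name[NR] = $3; total += $2 }
  END {
    for (i = 1; i <= NR && total > max; i++) {
      total -= size[i]
      print name[i]
    }
  }')

echo "evicted: $evicted"
```

Reading just 1G of fileB pushes the total to 11G, and because fileA is the least recently used entry, the whole 10G file goes. With a max size comfortably above the largest file you play, a small extra read would not flush it.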

Hello, I am testing with three files of 2.2G, 6.6G and 60G. Of the last one I downloaded only a part and then stopped playing. The cache is now 9.1G. I won't touch it until tomorrow, then I'll check the log and post a comment.
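One way to see exactly when the cache empties overnight is to log its size periodically, for example from cron. A minimal sketch, assuming the default cache location `$HOME/.cache/rclone/vfs/<remote>` (adjust it if you set --cache-dir); the `log_cache_size` helper and the log path are hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: append "<UTC timestamp> <size in KiB>" for a cache dir.
# Usage: log_cache_size CACHE_DIR LOG_FILE
log_cache_size() {
  # du -sk prints "<KiB><TAB><path>"; keep only the number (0 if dir is missing).
  size_kib=$(du -sk "$1" 2>/dev/null | cut -f1)
  printf '%s %s\n' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "${size_kib:-0}" >> "$2"
}

# Example with the remote name from the mount command above (assumed default path):
# log_cache_size "$HOME/.cache/rclone/vfs/team1pruebacachevfs" "$HOME/cache-size.log"
```

Put the function and the call in a small script and run it hourly from cron (e.g. `0 * * * *`); the timestamp where the size drops can then be matched against the rclone debug log.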

I have 45G free on internal storage.

Many thanks.

Rather than guessing, a log file would shed light on it.

I have to wait until tomorrow to see if the error persists...

Hello, I have tested it and now it works fine! I don't understand why, but it works fine. Now I have a problem with bwlimit. Can I use this command?

rclone mount -vv team1:/ /home/ubuntu/team1/ --allow-other --vfs-read-ahead 50G --vfs-cache-mode full --vfs-cache-max-age 120h --vfs-cache-max-size 50G --bwlimit=10M --log-file=/home/ubuntu/LOGvfscache-Master.txt

I use it, but it doesn't limit the download... maybe I am using it wrong?

Thanks

Only upload is limited.

Ah, OK.

Many thanks.

That's not the case, bwlimit applies to both directions.

You'd have to share more details and a debug log of what you are seeing.
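Two things worth checking, based on the rclone documentation for --bwlimit: the value is in bytes per second, not bits (so 10M is about 84 Mbit/s), and upload and download can be limited separately with the UP:DOWN form, where either side may be `off`. A small sketch converting a line speed in Mbit/s into a --bwlimit value; the `mbit_to_bwlimit` name is made up and, like the docs' own example, it simply divides by 8 and ignores the MB/MiB difference:

```shell
#!/bin/sh
# Hypothetical helper: turn a speed in Mbit/s into a --bwlimit value.
# --bwlimit counts bytes per second, so divide the bit rate by 8.
mbit_to_bwlimit() {
  awk -v m="$1" 'BEGIN { printf "%gM\n", m / 8 }'
}

# Limit downloads to half of a 100 Mbit/s line, leave uploads unlimited:
printf '%s\n' "--bwlimit=off:$(mbit_to_bwlimit 50)"
```

This prints `--bwlimit=off:6.25M`, which could then replace `--bwlimit=10M` in the mount command.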

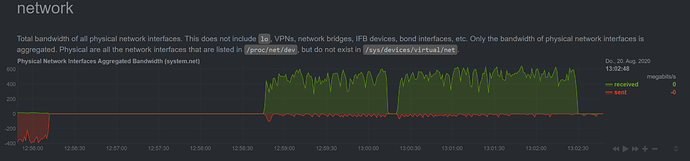

You'd have to include a full log file. That clip shows no traffic and nothing going on, so based on the snippet you have shared, something other than rclone is using your bandwidth.

I just tried the latest beta, and from what Netdata tells me, rclone downloads the complete file, which I didn't expect.

My mount command:

--allow-other --dir-cache-time 8760h --poll-interval 0 --vfs-cache-mode full --vfs-cache-max-size 250G --vfs-cache-max-age 8760h --drive-use-trash=false --log-level DEBUG --log-file /opt/rclone/rclone.log upload: /media/cry

I'm quite sure I am missing something here, so any hint is welcome.

Edit: Besides this, it seems to be quite stable.

Can you please share a full debug log as an example?

Here you go:

https://1drv.ms/u/s!AoPn9ceb766mg4Vj5Bqc9_SAceDwHA?e=2aatqx

It's the log from that particular time frame.

Can you share the whole thing? It's missing the version and the parameters that you used.

Is there any chance we could have a dynamic way of using the cache with multiple remotes? That is, specifying an overall amount of disk space they can share, without hard-capping each remote to a fixed portion of it.

I'm testing this with an rclone union defined as upstreams=/local/data union-remote:

I mount with: rclone mount --vfs-cache-mode=full --vfs-cache-max-size=250G --vfs-cache-max-age=300h

Everything works great on my end. I also replaced mergerfs with rclone union. I found one issue with that: the dir-cache. A high cache time prevented imports, because new files on the local filesystem were not being seen. Is there a way to prevent local directory caching when using union? I have since reverted to the default of 5m to alleviate the issue.
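One option that may help, assuming the mount is started with --rc so the remote control API is available: keep a long --dir-cache-time and flush the directory cache only for the import folder after new local files appear, using the `vfs/forget` rc command (`vfs/refresh` is the related command for re-reading listings). I haven't verified this with a union; the `forget_dir` helper and the example path are hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: ask a running `rclone mount --rc` to drop its cached
# listing of one directory (path relative to the mount root), so the next
# listing is read fresh from the union, including new local files.
forget_dir() {
  rclone rc vfs/forget "dir=$1"
}

# Example, after an import lands in /local/data/incoming:
# forget_dir incoming
```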

I could probably get away with just using the remote directory without the union, but then I lose the ability to control when files upload. If I could control an upload interval on the mount, I could just use that. Has anyone tried that and run into issues?
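For controlling when files upload while keeping the union, one common pattern is to run rclone move from the local upstream to the cloud upstream on your own schedule. A minimal sketch using the upstreams from the union definition above; the `upload_local` name, the 15m --min-age, the log path and the cron timing are assumptions to adjust:

```shell
#!/bin/sh
# Hypothetical helper: move files from the union's local upstream to its
# cloud upstream. --min-age skips files modified in the last 15 minutes,
# so files still being written are left for the next run.
upload_local() {
  rclone move /local/data union-remote: \
    --min-age 15m \
    --delete-empty-src-dirs \
    --log-file "$HOME/rclone-upload.log"
}

# Call it from a script run by cron, e.g. every 30 minutes: */30 * * * *
```

Since the union shows both upstreams, moved files should stay visible at the same path in the mount; they just change which upstream holds them.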