After two days of backup, I used rclone to check the size of the source and the destination, and both gave the same size and number of objects (392GB). When I check the source’s size in the Mac’s Finder, it shows 421GB. Any reason why this is happening?

392 GiB = 421 GB

do:

rclone check <source> <destination>

to see if they actually match

rclone uses binary prefixes everywhere (1 GiB = 1024^3 bytes), while Finder reports decimal GB, so your calculation is correct.

394 * 1.024 * 1.024 * 1.024 = 423.054278656
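If anyone wants to double-check the arithmetic, here is a small Python sketch of the GiB to GB conversion discussed above. The function name `gib_to_gb` is just made up for illustration; it is not part of rclone.

```python
GIB = 1024 ** 3  # bytes in one gibibyte (binary prefix)
GB = 1000 ** 3   # bytes in one gigabyte (decimal prefix)


def gib_to_gb(gib: float) -> float:
    """Convert a size given in GiB to decimal GB."""
    return gib * GIB / GB


print(round(gib_to_gb(392), 1))  # 420.9
print(round(gib_to_gb(394), 1))  # 423.1
```

So a 392 GiB reading and a 421 GB reading describe roughly the same number of bytes.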

Note that you can use rclone size to see the size of what you uploaded. rclone check is a great idea too.

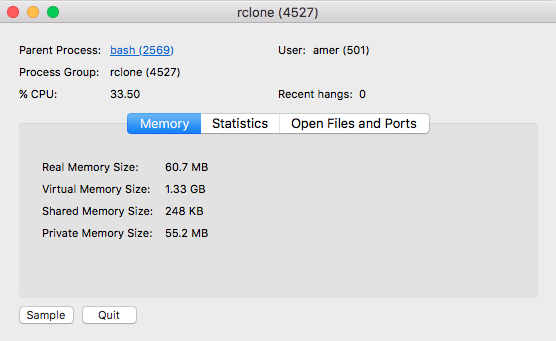

@ncw is it normal that it takes a lot of time to get the result when you execute the check command? It took more than 30 minutes and I had to cancel it, also I’ve noticed high CPU usage during this command.

Estimated size on remote? I just checked 2.8 TB. Took about 20sec

2017/01/21 15:42:30 Local file system at /home/st0rm/movies: Building file list

2017/01/21 15:42:53 Encrypted amazon drive root ‘x’: Waiting for checks to finish

@St0rm I have only 420 GB on the remote and it’s taking forever, even though I have a fast connection. This is the command I am executing: rclone check [source] [destination]. When “waiting for checks to finish” starts, rclone’s CPU usage goes up.

I had to force quit rclone due to the high CPU usage; it slows down the whole laptop.

Yes - rclone needs to calculate the md5sum of every local file you are checking.

Add --stats 1m and every 60 seconds rclone will print what it is doing, or add -v for lots of debug output.

Calculating the md5sum takes a lot of CPU.
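To give a feel for why this is CPU heavy, here is a rough Python sketch of hashing one local file. It is not rclone's code (rclone is written in Go), and `md5_of_file` and its `chunk_size` parameter are names I made up. The point is that every byte of every file has to be read and fed through the hash, so 420 GB of local data means 420 GB of hashing.

```python
import hashlib


def md5_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Return the hex MD5 digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        # Read fixed-size chunks until read() returns empty bytes at EOF,
        # so large files never have to fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Calling `md5_of_file("/path/to/some/file")` on a large file should show the same kind of CPU load on a small scale.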

md5 sums don’t get checked on crypt remotes (only file sizes are compared, I guess?), which is probably why the check is so much quicker for those of us who use encryption
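If that guess is right, a size-only comparison would look something like the Python sketch below. This is purely illustrative: it compares two local directories, the names `file_sizes` and `size_mismatches` are hypothetical, and it says nothing about how rclone actually does it. It only reads file metadata, never the file contents, which is why it would be so much cheaper than hashing.

```python
import os


def file_sizes(root: str) -> dict[str, int]:
    """Map each file's path (relative to root) to its size in bytes."""
    sizes = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            sizes[os.path.relpath(full, root)] = os.path.getsize(full)
    return sizes


def size_mismatches(src: str, dst: str) -> list[str]:
    """Return relative paths that are missing on one side or differ in size."""
    a, b = file_sizes(src), file_sizes(dst)
    return sorted(p for p in a.keys() | b.keys() if a.get(p) != b.get(p))
```

Note that two files with the same size but different contents would pass this kind of check, which is the trade-off against checksums.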